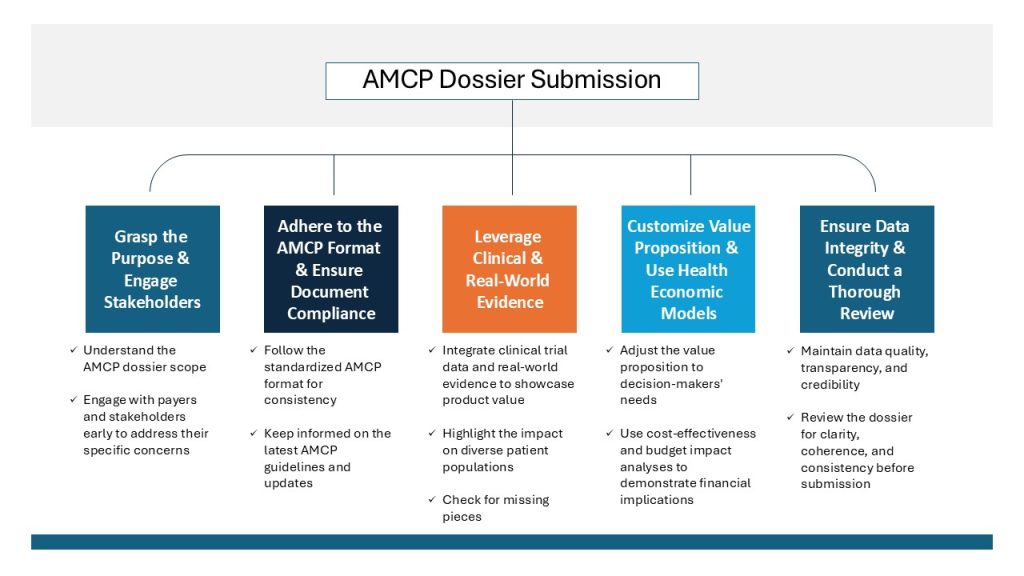

Effective healthcare decision-making depends on clear communication and rigorous assessment of value. For market access and health economics and outcomes research (HEOR) professionals, the AMCP (Academy of Managed Care Pharmacy) dossier is an important instrument for this. It helps ensure that healthcare decision-makers, such as payers and formulary committees, understand the true value of what you have to offer. Whether you are a seasoned professional or new to the process, here is how to make an impact with your AMCP submission.

1. Grasp the Purpose

The AMCP dossier isn’t just a regulatory formality; it’s a tool for strategic communication. It does this by providing comprehensive, evidence-based information on the value of your product, including its clinical effectiveness, safety profile, and economic consequences.

2. Adhere to the AMCP Format

The AMCP Format for Formulary Submissions defines a standardized structure for dossiers, which promotes consistency and makes it easier to compare products. At a high level, a dossier covers three core areas:

- Executive Summary: Provides a concise overview of the product’s value proposition, highlighting key clinical and economic outcomes.

- Clinical Evidence: Contains a comprehensive synopsis of clinical trials, observational studies, and other relevant data that demonstrate the product’s efficacy and safety.

- Economic Evidence: Includes cost-effectiveness analyses (CEA), budget impact analyses (BIA), and other economic evaluations that underscore the financial implications of adopting the product.

3. Leverage Real-World Evidence

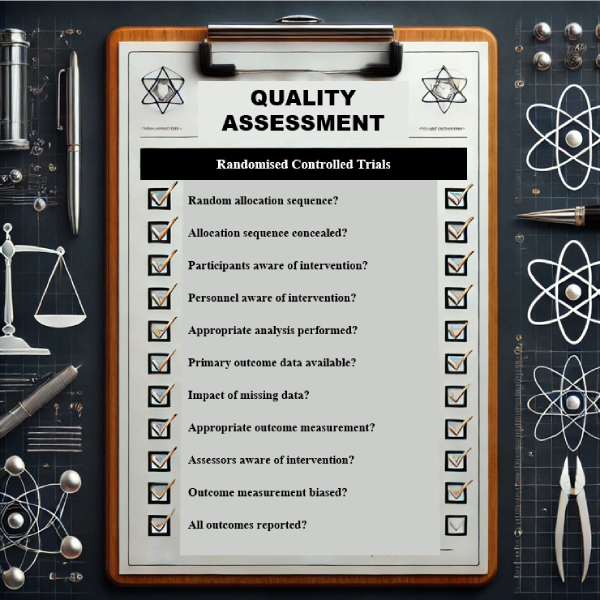

While randomized controlled trials (RCTs) remain the gold standard for clinical evidence, the importance of real-world evidence (RWE) in the AMCP dossier cannot be overstated. RWE offers valuable insights into how a product performs in routine clinical practice, enabling payers to assess its impact across diverse patient populations.

4. Customize the Value Proposition

Payers and decision-makers seek a comprehensive understanding of value beyond just efficacy. Customize your value proposition to address the specific concerns of your target audience. Emphasize outcomes that matter most to them, such as reducing hospitalizations, enhancing patient quality of life, or delivering cost savings.

5. Uphold Data Integrity and Transparency

The credibility of your AMCP submission relies on the quality and transparency of the data presented. Ensure that all data sources are reputable and that methodologies are thoroughly documented. Payers must have confidence that the evidence provided is both robust and impartial.

6. Stay Informed on AMCP Guidelines

As the healthcare landscape evolves, so do the guidelines for AMCP submissions. Keep up to date with revisions to the AMCP Format for Formulary Submissions, as these changes can significantly influence how your dossier is evaluated.

7. Leverage Health Economic Modelling

Health economic models, such as CEA and BIA, are vital elements of the AMCP dossier. These models offer quantitative insights into the product’s value in terms of both health outcomes and financial implications. Ensure your models are rigorously designed, transparent, and closely aligned with payer expectations.
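To make the CEA and BIA concepts more concrete, here is a minimal Python sketch of the core arithmetic behind each. It is a simplified, deterministic illustration, assuming per-patient expected costs and effects (for example, quality-adjusted life years, or QALYs) have already been estimated. Real dossier models typically add model structure (such as decision trees or Markov models), discounting, and sensitivity analyses. The `Strategy` class, the function names, and all numbers in the example are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Strategy:
    """Expected per-patient outcomes for one treatment strategy (hypothetical inputs)."""
    name: str
    cost: float    # expected cost per patient, in currency units
    effect: float  # expected health effect per patient, e.g. QALYs


def icer(new: Strategy, comparator: Strategy) -> float:
    """Incremental cost-effectiveness ratio: additional cost per additional unit of effect."""
    delta_cost = new.cost - comparator.cost
    delta_effect = new.effect - comparator.effect
    if delta_effect == 0:
        # The ratio is undefined when both strategies are equally effective.
        raise ValueError("ICER is undefined when the incremental effect is zero")
    return delta_cost / delta_effect


def budget_impact(
    eligible_patients: int,
    uptake_by_year: Sequence[float],
    cost_new: float,
    cost_current: float,
) -> List[float]:
    """Change in plan spending for each year, assuming the given share of eligible
    patients switches from the current treatment to the new product."""
    incremental_cost = cost_new - cost_current
    return [eligible_patients * share * incremental_cost for share in uptake_by_year]


if __name__ == "__main__":
    # All figures below are hypothetical and for illustration only.
    new_product = Strategy("New product", cost=52_000, effect=6.5)
    standard_care = Strategy("Standard of care", cost=40_000, effect=6.0)
    print(f"ICER: {icer(new_product, standard_care):,.0f} per QALY gained")
    # -> ICER: 24,000 per QALY gained

    yearly = budget_impact(1_000, [0.25, 0.5, 0.75], cost_new=12_000, cost_current=9_000)
    for year, delta in enumerate(yearly, start=1):
        print(f"Year {year}: {delta:+,.0f}")
```

The two functions answer different payer questions: the ICER asks whether the extra health gain is worth its extra cost per patient, while the budget impact asks what adoption would cost the plan each year. A negative ICER needs careful interpretation, since it can mean the new product either dominates or is dominated by the comparator.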

8. Engage Stakeholders Early

Initiate early engagement with payers and key stakeholders during the development process. Gaining insight into their specific needs and concerns allows you to tailor your AMCP submission to effectively address their questions and challenges. This proactive approach also helps to build relationships and trust, which are essential when your dossier is under review.

9. Conduct a Thorough Review

Before finalizing your dossier, ensure it undergoes a comprehensive review. Focus on clarity, coherence, and consistency throughout the document. A meticulously organized and polished submission is more likely to make a strong, positive impression on decision-makers.

Conclusion

Mastering the AMCP dossier submission process is essential to communicating your product’s value effectively to healthcare decision-makers. By following the required format, integrating real-world evidence, customizing the value proposition, and maintaining data integrity, you can develop a compelling dossier that differentiates your product in a competitive marketplace. Revisit your approach periodically as the industry changes, so that your submissions remain impactful and aligned with evolving standards.